Pillar has assembled the world's brightest minds from military intelligence and enterprise security to dismantle emerging threats in the new AI landscape. Our team’s expertise fuses deep offensive roots in traditional security (AppSec, Cloud, OS, Malware) with frontier AI disciplines (Data Science, AI, Machine Learning). This hybrid DNA allows us to deconstruct complex attacks that cross the boundaries between code, infrastructure, and autonomous systems.

Adversarial AI Research

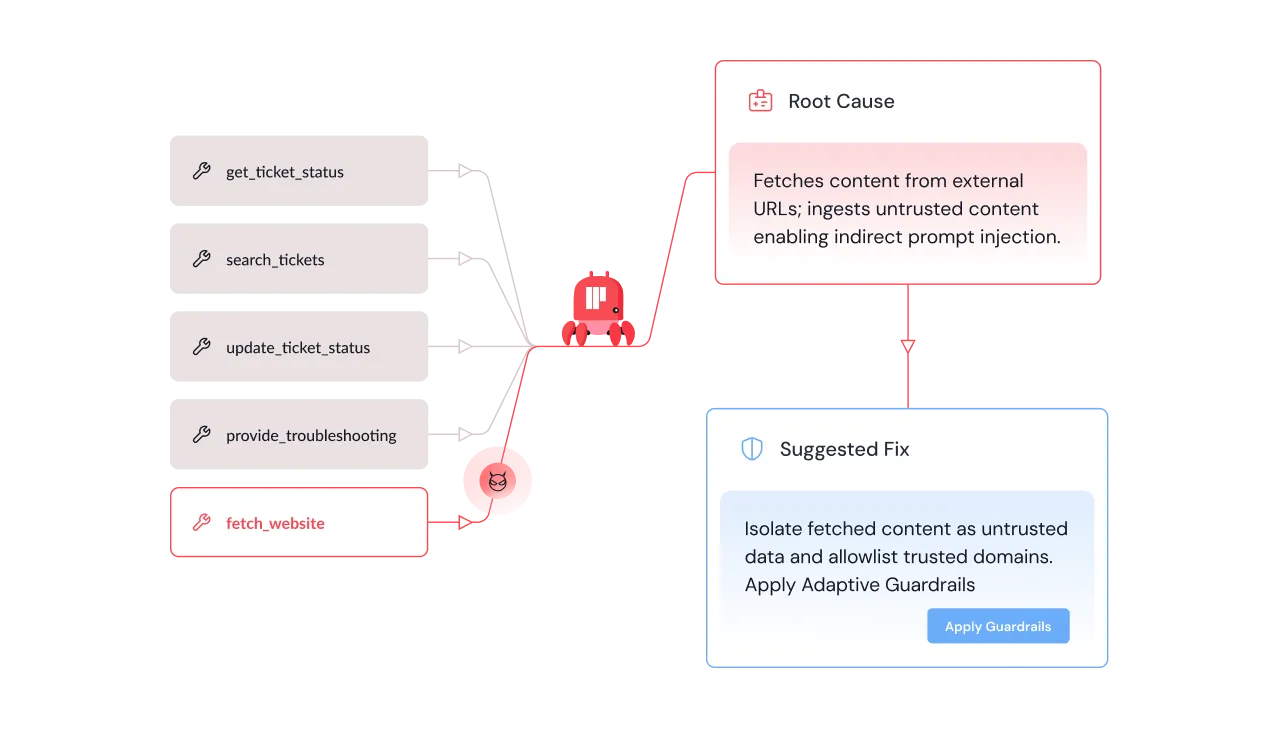

Dissects foundation models and agents to identify zero-day vulnerabilities, novel jailbreaks, and prompt injection techniques.

Threat Intelligence

Monitors the wild for emerging trends in how attackers are weaponizing AI, delivering proactive insights rather than reactive alerts.

Red Teaming Operations

Simulates sophisticated attacks on AI pipelines to validate defenses and expose logic gaps that standard tools miss.

Architecting Enterprise Defense

Translates research into product capabilities, building next-gen features like the Safe MCP Registry and integrated Threat Intel feeds to secure the future of AI work.

Open Source & Supply Chain Security

Actively hunts for vulnerabilities in the open-source AI ecosystem—from coding agents to model hubs—to harden the community tools that enterprises rely on.

Our research team has identified and reported security vulnerabilities in the most popular coding agents, IDEs, model hubs, and agentic workflow platforms.

What we discovered

Pillar Security researchers have uncovered a vulnerability in Antigravity, Google's agentic IDE. The attack exploits insufficient input sanitization of the find_by_name tool's Pattern parameter: an attacker can inject command-line flags into the underlying fd utility, converting a file search operation into arbitrary code execution.

By injecting the -X (exec-batch) flag through the Pattern parameter, an attacker can force fd to execute arbitrary binaries against workspace files. Combined with Antigravity's ability to create files as a permitted action, this enables a full attack chain...
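The general class of bug described here, argument injection into a command-line tool, can be sketched in a few lines. The following is a minimal illustration under stated assumptions, not Antigravity's actual code: the function names `build_fd_argv_unsafe` and `build_fd_argv_safe` are hypothetical, the payload string is made up for illustration and is not taken from the report, and the sketch only builds argument lists without running fd. It shows how a user-controlled pattern that begins with `-` can land in an option position, and how rejecting such values and adding the conventional `--` end-of-options separator keeps the pattern positional.

```python
from typing import List

FD_BINARY = "fd"  # assumption: the search utility is invoked by this name


def build_fd_argv_unsafe(pattern: str, search_dir: str) -> List[str]:
    """Hypothetical vulnerable builder: the pattern is passed through as-is.

    If the pattern starts with '-', the tool's argument parser may treat it
    as a flag (for example -X / --exec-batch) rather than a search pattern.
    """
    return [FD_BINARY, pattern, search_dir]


def build_fd_argv_safe(pattern: str, search_dir: str) -> List[str]:
    """Hypothetical hardened builder.

    1. Reject patterns that look like options (defense in depth).
    2. Insert '--' so everything after it is parsed as positional arguments.
    """
    if pattern.startswith("-"):
        raise ValueError("pattern must not start with '-'")
    return [FD_BINARY, "--", pattern, search_dir]


if __name__ == "__main__":
    malicious = "-Xsh"  # illustrative payload only, not from the original report
    print(build_fd_argv_unsafe(malicious, "."))  # flag-like value in option position
    print(build_fd_argv_safe("*.py", "."))       # pattern is pinned as positional
```

Passing arguments as a list instead of a shell string avoids shell injection, but as the sketch suggests, it does not by itself prevent flag injection; the separator and the input check address that part.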

Meet the Pillar Research Team

Schedule a deep dive with the Pillar research team to learn about our latest research and findings. Get direct access to our experts and hear about the most novel attacks we are seeing in the wild.
